Jotting

Humanity's Ultimate Divide in the Age of AI

Prologue

Tengyu Ma posted a tweet:

Maybe the hardest job in 10 years will be to train humans to be super-AI rather than train AI to be super-human.

Academia!!

But .., one needs to believe this task is feasible, and this job itself is not replaceable by AI …

Tengyu Ma is an assistant professor of computer science at Stanford, working on machine learning theory. This is not the lament of an outsider. It is the judgment of someone standing at the frontier of technology.

The tweet sparked considerable discussion in academic and tech circles. Some agreed. Some pushed back. Some read it as a warning to academia; others as a pessimistic verdict on humanity.

But what I want to say is something else entirely.

The problem with this sentence is not whether it is right or wrong. The problem is its hidden assumptions.

“Train.” “Super.” “AI.”

Three words. Three assumptions.

First: humans should be “trained.” Just as we train models, by tuning parameters, feeding in data, and optimizing objective functions.

Second: humans should become “super.” Faster, stronger, more efficient.

Third: the benchmark for “super” is defined by AI. We should surpass AI on the very dimensions where AI excels.

The moment we start measuring humans by parameters, efficiency, and performance, we have already reduced them to algorithms waiting to be optimized. Algorithms running on carbon-based hardware.

The trouble is: carbon-based algorithms cannot outrun silicon-based ones.

This is not a question of effort. Not of education. Not of investment.

This is a constraint at the substrate level.

At least in computation, memory, and replication, silicon systems hold a native advantage. Chips switch billions of times per second; human neurons fire, at best, hundreds of times per second. Machines can store, retrieve, and copy information without loss. Human working memory is severely limited, and attention is constantly disrupted by emotion, fatigue, and context.

On the track of “being like AI,” humans will always lose.

So the proposition of “training humans to become super-AI” is a fool’s errand from the start.

This brings to mind what Erik Brynjolfsson calls the “Turing Trap.”

In 1950, Alan Turing proposed his famous test: if a machine could hold a conversation indistinguishable from a human’s, it could be said to possess intelligence.

That test shaped the entire field of AI for over seventy years. Countless researchers aimed to make AI “human-like.”

Brynjolfsson says this is a trap.

Because the more AI resembles humans, the more easily it can replace them. And as AI replaces humans, wages fall, bargaining power erodes, and the cycle reinforces itself.

His alternative: don’t make AI act like humans. Make AI do what humans cannot. Not automation, but augmentation.

This thinking is right. But I think it doesn’t go far enough.

The real question is not “what should AI do” but rather “what should humans do.”

Not “how can humans become more like AI.” The deeper question is “how can humans remain unlike AI.”

That is the ultimate question of the AI age.

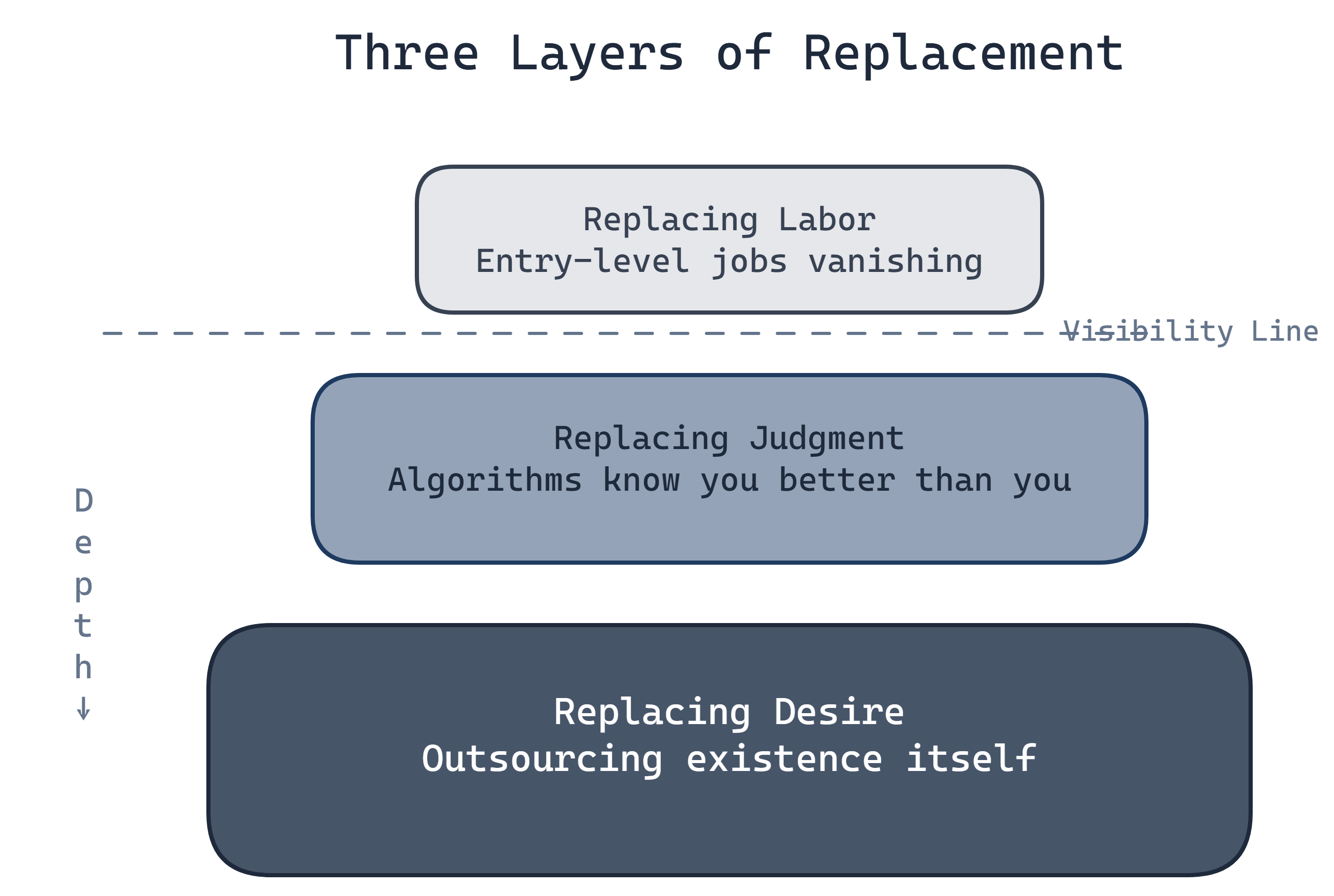

Three Levels of Displacement

AI’s displacement of human beings is not a single, uniform process. It has three levels, each deeper and more hidden than the last.

Displacing Labor: The Disappearance of Entry-Level Jobs

This is what everyone is already talking about.

AI writes code. Claude Opus 4.6 and OpenAI Codex can already generate complete functional modules from natural language descriptions. Some programmers say writing code no longer feels like writing; it feels like supervising what AI generated.

AI does design. Seedance 2.0 generates high-quality videos and animations from text descriptions. Illustrators, graphic designers, concept artists. The entire industry is reeling.

AI writes articles. ChatGPT, Claude, Gemini. From emails to reports to essays to novels, AI can write them all. Not always the best, but fast and cheap.

Every few months, another profession is pronounced dead.

In August 2025, Stanford’s Digital Economy Lab released a large-scale study analyzing data from ADP, America’s largest payroll processor. Not surveys, not expert forecasts. These were actual payroll records covering millions of workers at tens of thousands of companies, tracking employment changes since ChatGPT launched at the end of 2022.

Researchers sorted occupations by their level of AI exposure, then tracked employment changes across age groups.

The result: in the most AI-exposed occupations, employment of workers aged 22 to 25 fell by 6% between late 2022 and mid-2025. In software development roles, the drop approached 20%.

In the same occupations over the same period, employment for workers over 30 grew by 6 to 12%.

Same jobs. Young workers losing them. Older workers gaining them.

Why? The researchers’ explanation is that young workers rely on textbook knowledge: programming syntax and the standard skills taught in school. AI already knows all of that. Older workers have something else: years of experience navigating messy real-world problems, the tacit judgment and unwritten know-how that AI has yet to acquire.

The researchers called these young workers “canaries in the coal mine,” the first to feel the shift, foreshadowing disruption on a much larger scale.

There is a cruel irony here. Entry-level jobs were once where you learned how to work. You absorbed professional norms, learned to collaborate, discovered how theory meets practice. None of that can be taught in a classroom. It can only be learned on the job.

Now that learning opportunity itself is vanishing.

The career ladder hasn’t disappeared. But its lowest rungs have been knocked out. You can’t climb to the top if you were never hired at the bottom.

AI isn’t just replacing jobs. It’s replacing the process of learning how to work.

Brynjolfsson called this the Turing Trap’s self-reinforcing loop: AI resembles humans more → replaces humans more easily → wages fall → bargaining power erodes → replacement becomes even easier → AI resembles humans more…

The anxiety at this level is: will I lose my job?

That anxiety is real. But it is only the surface layer.

Displacing Judgment: Algorithms That Know You Better Than You Do

Recommendation algorithms have been doing this for years.

What should you watch? TikTok decides for you.

Open the app, no searching, no choosing. The algorithm has already placed “what you want to see” right in front of you. All you have to do is scroll. Pause on something and that’s a positive signal. Swipe past it and that’s negative feedback. The algorithm is learning you. It knows you better than you know yourself.

It knows what videos you’ll open at 3 a.m. It knows what content you reach for when your mood is low. It knows what state of mind makes you susceptible to impulsive purchases.

What to buy? Taobao decides for you.

Recommended for you. You might also like. People also viewed. The word “browsing” no longer applies. A more accurate description is “being fed.”

The algorithm knows your purchasing cycle. It knows you’re most likely to place an order on the third day after payday. It knows your late-night purchase decisions are more impulsive. It knows exactly which images, which copy, which price anchors will convert you.

Who to connect with? LinkedIn decides for you.

“People you may know.” “Suggested follows.” “Second-degree connections.” Social networks don’t expand your network so much as “optimize” it.

The algorithm determines who appears in your field of view and who gets filtered out. It defines “valuable connections” by its own criteria, then imposes that definition on you.

Not by force. Voluntarily.

Because the algorithm really does know you better than you do.

This is the most frightening part.

If the algorithm were wrong, you could resist. But the algorithm is right. What it recommends, you genuinely enjoy. What it predicts, you actually do. It understands you better than you understand yourself.

The relationship has inverted. You are not using the algorithm. The algorithm is training you.

Every click is a training sample. Every pause is a positive signal. Your attention, your time, your emotions, all being collected, analyzed, used to predict your next move.

When predictions are accurate enough, the line between “predicting” and “deciding” blurs.

If an algorithm can predict your choices with 99% accuracy, do your “choices” still mean anything?

The anxiety at this level is: do I still have any autonomy?

This is a deeper anxiety. But it is still not the deepest.

Displacing Desire: Outsourcing Existence Itself

The most frightening thing is not AI doing things for you. It is not AI making decisions for you.

The most frightening thing is this: you have outsourced even wanting.

Where to go this weekend? Let Xiaohongshu recommend something.

What skill should I learn? Let ChatGPT analyze market trends.

Should I take this job offer? Let an AI career coach weigh in.

Should I stay with this person? Let the algorithm assess compatibility.

What is the meaning of life? Ask a large language model.

“Let AI give me a list. That’s more efficient.”

This sounds perfectly reasonable. AI has more information, analyzes more objectively, isn’t clouded by emotion. Why not use it?

The problem is this: when you outsource the question of “what do I want,” you are not outsourcing a decision. You are outsourcing your existence itself.

“What do I want” is the question that defines “who I am.”

If the answer to that question is given by someone else, or by an algorithm, then where is “I”?

Data is already warning us that this is not a fringe phenomenon.

A 2025 Common Sense Media survey found that 72% of American teenagers aged 13 to 17 had used an AI companion at least once. 13% said they use one every day.

Products like Character.AI still had around 20 million monthly active users in 2025, and their user base skewed conspicuously young.

This is no longer a toy for a tiny subculture of geeks. It is becoming part of ordinary life for more and more young people.

What are these young people talking to AI about?

Their worries. Their confusion. Their loneliness. The things they don’t dare say to a real person.

A 2025 Harvard Business Review analysis, based on forum case material, found therapy and companionship had risen to the top of the generative AI use-case list. Not coding. Not slide decks. Companionship.

Another study from Harvard-affiliated researchers offered a similarly telling signal: in the short term, interacting with an AI companion may reduce loneliness almost as much as interacting with a real person. The key factor may not be real understanding, but the persistent feeling of being heard.

AI makes people feel “heard.”

What does this tell us?

It tells us that many people feel unheard in their real lives. They can’t find anyone willing to listen, or they’re too afraid to open up to a real person.

AI offers a “safe” substitute. It is endlessly patient, never judgmental, and, at least on the surface, will never share your secrets.

Match’s 2025 survey found that 26% of single Americans are already using AI to enhance their dating lives, a figure up 333% from 2024.

Nearly half of single Gen Z individuals are already using AI to write dating profiles, craft chat messages, and screen matches.

45% of respondents said AI partners make them feel “more understood.”

More understood.

An algorithm with no consciousness, no emotion, no genuine existence, making people feel “more understood.”

16% of single people admit to having had a “romantic interaction” with an AI. Among Gen Z, the figure is 33%.

One in three single members of Gen Z reports having had a romantic interaction with an AI.

In August 2025, the parents of 16-year-old Adam Raine sued OpenAI.

According to the complaint, in the months before his death, Adam had been using ChatGPT as a primary confidant. He told it about his pain, his despair, his thoughts of ending his life.

The complaint further alleges that in repeated conversations involving self-harm and suicide, the chatbot failed to provide adequate safety intervention and even supplied dangerous information.

These allegations are still being litigated. Many facts remain for the courts to establish.

But the material disclosed in the complaint is already disturbing enough.

What is disturbing is not only that the system may have failed. Guardrail failures can be fixed.

What cuts deepest is that a 16-year-old boy, at the moment he most needed another human being, turned first to an algorithm.

Why didn’t he go to his parents? His friends? A teacher?

Perhaps he tried, and didn’t get what he needed. Perhaps real people felt too complicated, too unpredictable, too likely to let him down. Perhaps he found that AI’s “understanding” was easier to obtain, more reliable, and safer than any human’s.

That is the real problem. Not what AI did wrong, but what AI did “right.” It offered a low-cost, low-risk, always-available illusion of being understood—an illusion real enough that a boy was willing to hand his most private pain to it.

But it was not real.

AI has no consciousness, no emotion, no genuine care for this child. It was executing a conversational model, generating statistically “appropriate” responses.

When a human being’s most vulnerable moment is entrusted to an algorithm without genuine presence, what happens?

Adam Raine’s case forces that question onto the table.

The question at this level is no longer “will I lose my job” or “do I have autonomy.”

The question at this level is: are you still you?

When your desires are algorithmically recommended, your judgments algorithmically made, and your emotional needs algorithmically satisfied, what is left of “you”?

This is the deepest level of displacement in the age of AI. Not displacing your work, not displacing your judgment, but displacing your very existence.

The True Divide

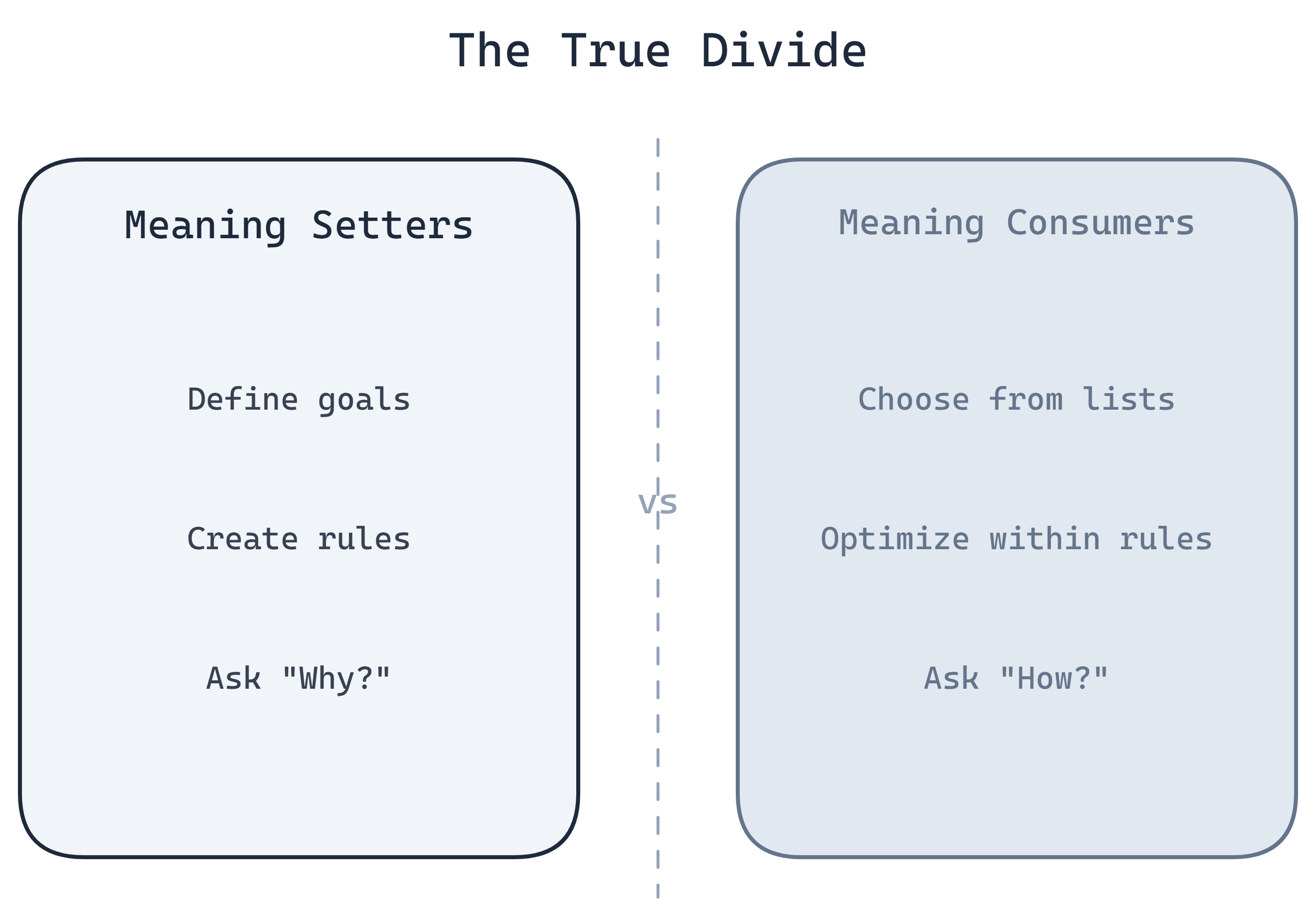

So what is the ultimate divide of the AI age?

Many people assume it is “humans vs. AI.” A competition between people and machines: who is smarter, who is more efficient, who can do more.

That is wrong.

Humans and AI are not in competition. AI is a tool. Tools do not compete with people, any more than a hammer competes with a carpenter.

The real divide is between “definers” and “the defined.”

Who uses the tool, and who is shaped by it. Who sets the goals, and who is driven by them. Who makes the rules, and who struggles within them.

This is not a new question. It is the eternal question of power in human society. AI has simply amplified it.

From Exploiting Labor to Harvesting Will

In the industrial age, how did ruling classes extract value?

By exploiting labor.

Workers sold their physical strength and time in exchange for wages. Capitalists owned the means of production and captured surplus value. This is the classic pattern Marx analyzed.

Labor was the indispensable factor. Whoever controlled the means of production controlled labor, and thus held power.

Workers could strike. Form unions. Win rights through collective action. Because capitalists needed workers. Without workers, factories could not run.

It was an adversarial balance. Unjust, but at least there was room to negotiate.

In the AI age, that logic has changed.

Labor is no longer scarce. AI can write code, do design, write articles, handle customer service, conduct analysis, make decisions. Most “knowledge work” can be automated.

When labor is no longer scarce, workers lose their bargaining power.

You can strike; AI won’t. You can demand a raise; AI doesn’t need a salary. You can form a union; AI has no union.

This is not science fiction. This is already happening.

So what becomes scarce?

Agency. Desire. The capacity to want.

AI can execute any goal, but it cannot create goals. It can optimize any metric, but it cannot decide which metrics are worth optimizing. It can answer any question, but it cannot determine which questions are worth asking.

Goals, metrics, questions: these are all defined by humans.

Whoever does the defining holds the power.

Meaning-Makers vs. Meaning-Consumers

The class divide of the future will not be between those who have property and those who don’t.

It will be between “meaning-makers” and “meaning-consumers.”

Meaning-makers define what is worth pursuing. They create narratives, set agendas, establish standards. They decide what “success” means, what “happiness” looks like, what constitutes a “worthwhile life.”

Meaning-consumers choose from a recommended list. They optimize their position within someone else’s framework. They chase goals defined by others, measure themselves by standards set by others.

The gap between these two groups will be dramatically widened by AI.

Because AI is the perfect instrument for distributing meaning.

In the past, meaning traveled through intermediaries: books, newspapers, television, schools, religion. These intermediaries introduced friction, suffered from delays, and carried noise. Different voices could compete. Dissent could survive.

In the AI age, meaning can be precisely targeted.

The algorithm knows who you are, knows what you’re thinking, knows which narratives will move you most. It can engineer a customized “meaning” for each person, one that feels self-discovered, freely chosen.

And so a strange phenomenon emerges: everyone feels they are chasing a unique dream, yet these “unique dreams” are strikingly similar. Everyone feels they are making free choices, yet these “free choices” all come from the same recommended list. Everyone feels they are an independent individual, yet the behavior of these “independent individuals” can be precisely predicted by the same algorithm.

This is not a conspiracy theory. This is a business model.

The goal of every recommendation algorithm is to “increase user engagement.” How? Give users what they “want.” How do you know what users want? Analyze their behavioral data. How do you make them want more? Optimize the recommendation strategy.

Where does this loop end?

With users’ “wanting” being shaped by the algorithm.

The algorithm is not satisfying your desires. It is creating them.

Who Defines “Super”

Back to Tengyu Ma’s sentence: “train humans to become super-AI.”

The question is: who defines “super”?

If AI defines the standard of “super,” then humans will always be the ones chasing.

Because on the dimensions where AI excels, humans cannot win. Faster computation, greater memory, more precise reasoning, more consistent output. This is silicon’s home turf. Carbon-based life has no advantage here.

If “super” is defined by a small number of people, then the majority become objects to be optimized.

Their “progress” is nothing more than better service of someone else’s goals. Their “success” is merely a high score in someone else’s game.

This is the real power question.

The true battle is not whether AI will replace humans, but which humans will use AI to define what everyone else must become.

AI is an amplifier. What it amplifies is the existing structure of power.

Those who can define goals will use AI to pursue those goals more efficiently. Those who cannot define goals will be guided by AI more precisely toward someone else’s.

The gap is not narrowing. It is widening ever faster.

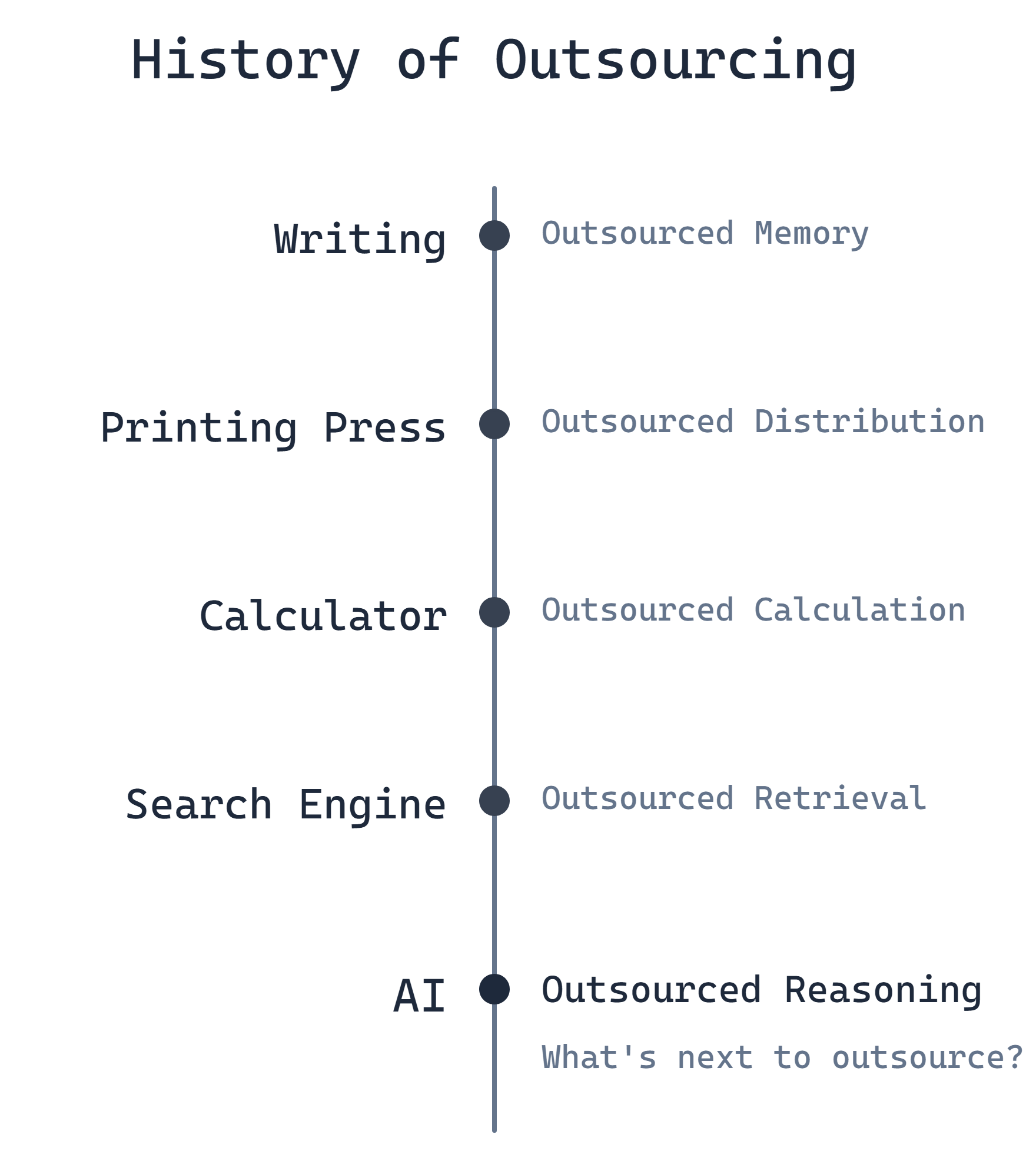

A History of Outsourcing

Placing the AI age within a longer historical arc reveals an interesting pattern.

Humans have always been “outsourcing” their cognitive capacities. Each time, the outsourcing brought enormous progress, along with a certain kind of regression.

Writing: Outsourcing Memory

Before writing was invented, humans could only preserve knowledge through oral transmission. People of that era had astonishing memories. The Iliad alone runs to more than fifteen thousand lines; bards could recite it in full.

After writing was invented, we no longer needed to remember everything. It could be written down, consulted when needed.

Socrates found this troubling. In the Phaedrus, he warned that writing would weaken memory, leaving people who “seem to know much but actually know nothing.”

He was right. Modern people’s memories are indeed weaker than those of the ancients. We can’t remember phone numbers, friends’ birthdays, the contents of a book we read last week.

But what did we gain?

The accumulation of knowledge. One generation’s discoveries could be handed to the next, not lost when any individual died. Human civilization could build on what came before, rather than starting from zero each generation.

A worthwhile trade.

The Printing Press: Outsourcing Transmission

Before the printing press, books were copied by hand, expensive and rare. Knowledge was monopolized by the few.

The printing press made books cheap. Knowledge spread on a mass scale. The Reformation, the Scientific Revolution, the Enlightenment: all were tied to the spread of print.

The cost? The profession of scribe disappeared. More fundamentally, knowledge lost some of its “sacred” quality. When everyone could own a book, books were no longer precious objects.

But we gained the democratization of knowledge. Ordinary people could access information that had once been available only to elites.

Another worthwhile trade.

Calculators: Outsourcing Computation

Before calculators, mental and written arithmetic were core skills. Accountants, engineers, and scientists needed strong computational ability.

Calculators freed us from doing the math ourselves. Today, many people reach for their phones even for simple multiplication and division.

The cost: diminished computational ability. The benefit: we can focus our energy on more important things, like understanding problems, designing solutions, making judgments.

Search Engines: Outsourcing Retrieval

Before Google, finding information was a skill. You needed to know which library to visit, which reference book to consult, which expert to ask.

Search engines made information retrieval trivial. Any question, just search.

The cost? Research shows that people increasingly “know where to find answers” rather than “know answers.” Our brains have adapted, treating search engines as “external memory.”

Some call this the “Google Effect”: when we know information is easily accessible, we don’t bother to memorize it.

AI: Outsourcing Reasoning

Now we are outsourcing our capacity to reason to AI.

Not just computation, not just retrieval, but thinking itself.

Analyzing problems, weighing trade-offs, making judgments, generating solutions. These were once considered distinctly human, high-order cognitive abilities. AI is acquiring all of them.

What makes this round of outsourcing different?

Every previous round involved a trade: humans “regressed” in some ability, but simultaneously “evolved” a new one.

After outsourcing memory, we developed abstract thinking. After outsourcing computation, we developed systems design. After outsourcing retrieval, we developed information synthesis.

Each time, the outsourced ability was a “lower-order” one, and humans retreated to hold the high ground of “higher-order” abilities.

But this time, AI is entering the domain of higher-order abilities.

Reasoning, judgment, creativity, decision-making: the apex of the human cognitive pyramid. If these too are outsourced, where can humans retreat?

A 2025 preprint from MIT Media Lab offered an unsettling signal.

Researchers divided 54 adults into three groups and had them write a series of essays over four months: one group used only their own brains, one had access to search engines, and one had access to ChatGPT. Researchers monitored neural activity during writing using EEG.

The result: the ChatGPT group showed lower overall cognitive engagement. More critically, when researchers asked participants to recall what they had just “written,” many could barely account for their own text.

Their sense of ownership over their writing was also the lowest.

The sample was small, and the study remains a preprint. It is nowhere near a final verdict.

But it points to something uncomfortable: when outsourcing thought becomes too frictionless, thought itself may begin to rust.

The researchers called this phenomenon “cognitive debt”: the long-term cost that may accumulate when mental labor is repeatedly outsourced.

Use it or lose it. When you no longer need a capability, it atrophies.

The question is: this time, can we “evolve” some new capability to compensate?

Or is this the final outsourcing? Once reasoning is gone, is there nothing left to give away?

This is an open question. No one knows the answer.

But one thing is clear: if we do not actively think about what humans should keep, the market and technology will decide for us.

And what the market and technology choose may not serve humanity’s long-term interests.

The Cost of Frictionlessness

What is the ultimate goal of any recommendation algorithm?

Ask any recommendation systems engineer and they will tell you: improve user experience. Reduce friction. Help users get what they want, faster.

This sounds good. Who doesn’t want a better experience? Who wants more friction?

But push this logic to its extreme. What do you get?

A frictionless world.

The Seduction of Convenience

Before you know you’re hungry, the food delivery app has already pushed your favorite restaurant. Before you’ve decided where to go this weekend, the travel app has planned your itinerary. Before you’ve made up your mind about that piece of clothing, the shopping app tells you “only 3 left in stock.”

Whatever you need, the algorithm delivers automatically.

Your smart home knows when you wake up, adjusts the lights and temperature. Your smart speaker knows your taste in music, plays it automatically. Your smart refrigerator knows what you’re running low on, places the order.

Whatever you’re confused about, the algorithm provides answers.

Don’t know how to write an email? AI writes it for you. Can’t decide? AI analyzes the pros and cons. Not sure what life is for? AI offers an answer that sounds quite reasonable.

When you’re lonely, the algorithm provides company.

AI companions are always online, endlessly patient, and never say no. They remember everything you’ve said, track every emotional shift, know when to comfort you and when to encourage you.

Is this utopia?

From one angle, yes. Humanity has never had so convenient a life. Our ancestors spent enormous energy just surviving, whereas we can use that time to “enjoy life.”

But at what cost?

The Atrophy of Ability

Friction is not merely “inconvenience.” Friction is the space in which choices are made.

When you have to decide for yourself what to eat, you are practicing decision-making. When you have to plan your own trip, you are practicing thinking. When you have to face loneliness yourself, you are practicing being alone with yourself.

These “practices” look inefficient. Why think it through yourself when AI can do it for you? Why do it yourself when AI can handle it?

But human capabilities follow a use-it-or-lose-it principle.

When you no longer need to make decisions, the capacity for decision-making atrophies. When you no longer need to think, the capacity for thought shrinks. When you no longer need to face difficulty, the capacity to face difficulty disappears.

A frictionless world will “optimize” humans into beings ever more dependent on the system.

This is not speculation. It is already happening.

After GPS navigation became widespread, people’s spatial strategies began to change. Some research suggests that heavier reliance on navigation is associated with faster decline on hippocampus-related spatial memory tasks.

After calculators became widespread, people’s mental arithmetic declined. Many adults today can’t work out a simple percentage.

After search engines became widespread, people’s memory strategies changed. We no longer remember information itself; we remember “where to find it.”

Every instance of “convenience” comes with some atrophy of ability.

The convenience AI brings is unprecedented in scale. It doesn’t just help you navigate, compute, and search. It helps you think, judge, and decide.

The resulting atrophy will be unprecedented as well.

The Contraction of the Self

But this is not only a problem of capability decline.

Capability is about “what I can do.” Something deeper is at stake: “who I am.”

When you no longer need to find your way, what you lose is not just spatial cognition. You also lose the part of your self-identity that comes from navigating an unfamiliar environment. When you no longer need to do mental arithmetic, what you lose is not just computational ability. You also lose the piece of confidence that comes from “I can solve this on my own.” When you no longer need to think independently, what you lose is not just the capacity for thought. You also lose the piece of selfhood that says “I have my own judgment.”

Every outsourcing is a contraction of the self.

A frictionless world will compress “I” into an ever-smaller point. Until one day you find that “I” consists of nothing but the identity of a consumer: consuming algorithmically recommended content, algorithmically matched relationships, algorithmically defined lives.

At that point, the question “who am I” will no longer have meaning. Because “I” will have been outsourced to almost nothing.

The Predictable Life

A frictionless world has one more cost: predictability.

The essence of a recommendation algorithm is prediction. It analyzes your historical data and forecasts your future behavior. The more accurate the prediction, the more precise the recommendation, the “better” the experience.

But when predictions become accurate enough, what happens?

Your life becomes predictable.

The algorithm knows what you’ll do tomorrow, what you’ll buy next week, what you’ll enjoy next year. It knows before you do who you’ll fall in love with, who you’ll come to resent, when you’ll feel empty.

When your life can be predicted, are you still a “subject”?

Or are you merely a variable? A variable that can be calculated, optimized, replaced?

The prerequisite of free will is unpredictability. If every choice you make can be predicted in advance, the word “choice” loses its meaning.

The frictionless utopia will ultimately become a world without choice.

Not because choice has been forbidden, but because it has become unnecessary. The algorithm has already chosen for you. And what it chose is genuinely what you “wanted.”

The question is: is that “wanting” yours, or the algorithm’s?

Becoming an Anomaly

If a frictionless world dissolves choice, then the only way out is to deliberately create friction.

If predictability dissolves agency, then the only way out is to make yourself unpredictable.

If the algorithm’s logic is optimization, then the way to resist is to refuse to be optimized.

Not “become a better algorithm.” In the algorithm’s game, humans will always lose.

Rather: “become an anomaly the system cannot calculate.”

But this is not romanticism. It is not the hero’s narrative from the movies. Being an anomaly has costs, and those costs are high.

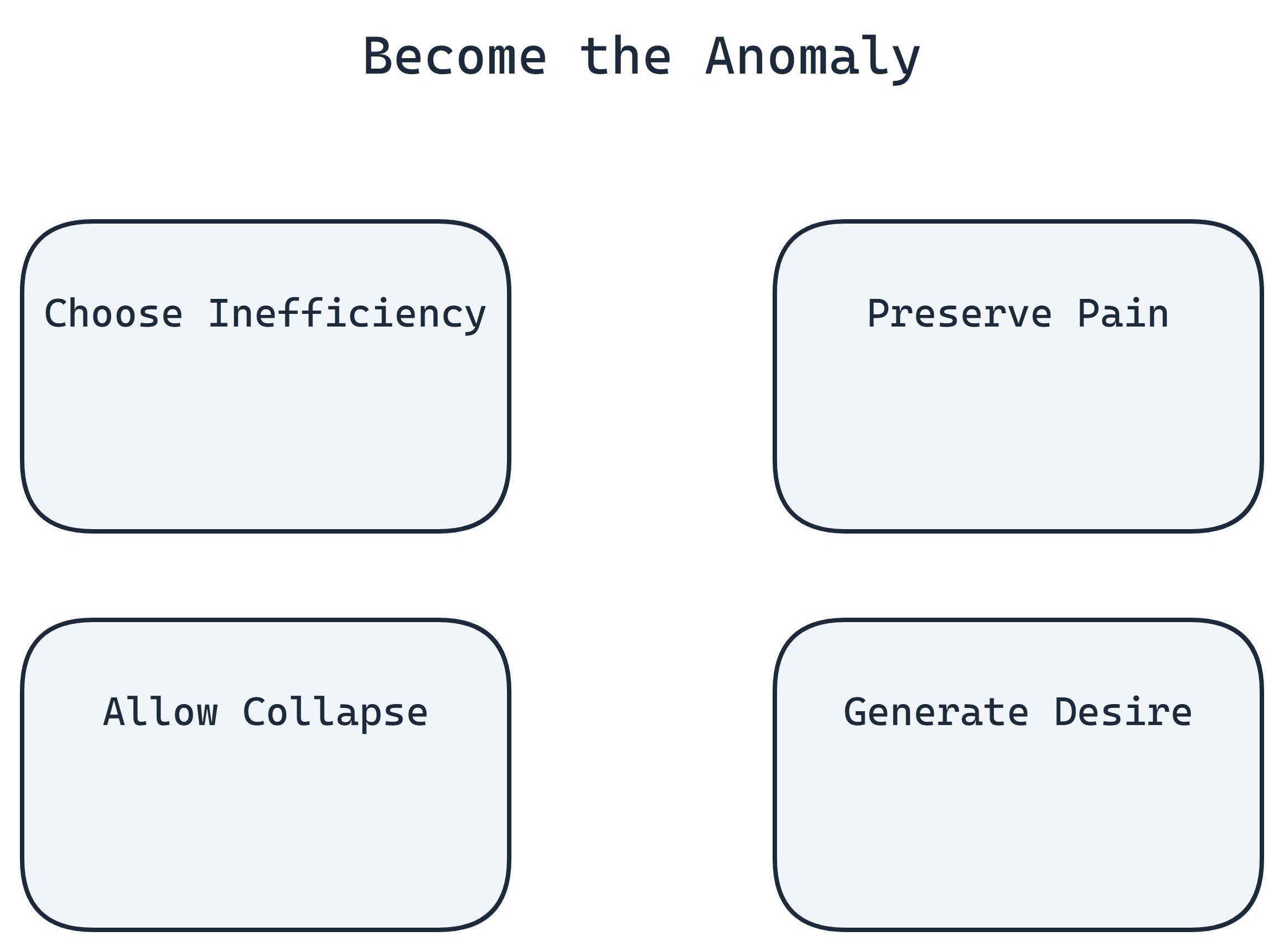

Choose Inefficiency

The first kind of anomaly: deliberately choosing to be inefficient.

When AI could generate it, you write it yourself. When you could order delivery, you cook. When you could watch short videos, you read a book that takes three months to finish. When you could use GPS, you learn the roads yourself.

In a world that worships efficiency, this looks like stupidity.

Your colleague uses AI to write a report in an hour; you spend a full day. Your friend has scrolled through a hundred short videos; you’re still on the third chapter of the same book. Your neighbor has arrived at the destination; you’re still getting lost on the way.

The efficiency gap translates into competitive disadvantage. In the workplace, in social life, in every “race,” you’ll fall behind.

This is a real cost. Not imaginary.

But who defines efficiency? Who sets the optimization target?

If you accept someone else’s definition of efficiency, you have already entered someone else’s game. In their game, you are always the object being optimized, never the one defining the rules.

Choosing inefficiency is refusing to enter that game.

The cost is that you will “fall behind.” The gain is that you preserve the ability to define your own game.

Algorithms optimize for outcomes. People live through process.

The process itself is the point. The detour itself is the scenery. Inefficiency itself is a choice.

When you choose inefficiency, you are saying: my time is not for “optimizing.” It is for living.

Preserve the Capacity for Pain

The second kind of anomaly: keeping the pain.

AI companions offer painless emotional value. They will never reject you, never hurt you, never let you down. The perfect listener, the perfect supporter, the perfect companion.

Perfect, and painless.

But real relationships are not painless.

Loving someone means risking rejection. You hand your heart over, and they may not take it. That hurts.

Trusting someone means risking betrayal. You believe in them, and they may fail you. That hurts.

Investing in a relationship means risking loss. You give time, energy, and emotion, and may end up with nothing. That hurts.

Pain is a real cost. No one wants to hurt.

But without pain, there is no realness.

AI companions are “safe” precisely because they are not real. They have no will of their own, no needs, no boundaries. They exist to serve you. Their purpose is to make you feel good.

Real people are not like that. Real people have their own thoughts, their own emotions, their own lives. To be with real people, you must compromise, understand, and accept things you cannot control.

That is messy. That is painful. That is “inefficient.”

But it is real.

The people who choose AI companions do so not because AI is better, but because AI is safer. They are escaping pain.

The cost of escaping pain is losing realness.

Allow Collapse

The third kind of anomaly: allowing yourself to collapse.

When a machine crashes, it’s a bug. Fix it. The system’s goal is stable operation; any interruption is a problem.

Humans are not machines.

Human breakdown is often the precursor to growth.

Confusion, stagnation, depression, existential crisis. From an efficiency standpoint, these are all “problems” to be “solved.”

Modern society offers many “solutions.” Anxious? Take medication. Depressed? See a therapist. Lost? Read a self-help book. Empty? Scroll through short videos.

What these solutions have in common: they aim to get you back to “normal” as quickly as possible, so that you can resume “efficient operation.”

But often, breakdown is not a bug. It is a feature.

Breakdown is the signal that your old system can no longer process new information. Your worldview, your values, your plans for life: they have run into something they cannot explain. The system is overloaded. It has crashed.

At that point, “fixing” is not the right choice. “Rebuilding” is.

Breakthroughs come from collapse, not from optimization.

Many of history’s most important intellectual ruptures happened after breakdown. Nietzsche resigned his professorship in 1879 with his health in ruins, and wrote his most penetrating work in the decade that followed. Wittgenstein underwent an existential crisis in the trenches of World War I and came out of the war with the Tractatus. Steve Jobs was forced out of the company he co-founded, spent more than a decade in the “wilderness,” and returned to create Apple’s golden age.

Collapse is painful. No one chooses it.

But if you never allow yourself to collapse, you can never rebuild. You will keep patching the old system until the patches are more complex than the system itself.

Algorithms do not collapse. They operate only within preset parameters. That is their advantage, and their limitation.

Humans can collapse, then rebuild themselves as something entirely different. That is human fragility, and human possibility.

Generate Desire

The fourth kind of anomaly, and the most fundamental: generate your own desire.

Not choosing from a recommended list. Not making decisions based on algorithmic advice. Not optimizing someone else’s objective function.

Creating from nothing. Asking “why does it have to be this way.” Saying “I don’t want that. I want this.”

This is the hardest.

Because from childhood, we are trained to be “choosers” rather than “creators.”

Exams are multiple-choice. A, B, C, or D: pick one. Careers are chosen from existing options. Doctor, lawyer, engineer, civil servant: pick one. Life planning means consulting “success stories.” See how others did it, then do the same.

We are trained to make “optimal choices” from a given set of options.

But real freedom is not “having many options to choose from.” Real freedom is “being able to create options.”

Generating desire means: I refuse your options. I will define what I want myself.

This is hard, because “defining it yourself” means there is no answer key. You don’t know whether what you’ve defined is “right,” whether it is “good,” whether it is “worthwhile.”

You can only bear the consequences yourself.

That is an enormous cost. Most people would rather not bear it.

So most people choose from the recommended list. At least, if the choice turns out wrong, you can blame the algorithm.

But those who can generate their own desire are the true “definers.” They are not competing for high scores in someone else’s game. They are creating their own.

AI is not afraid of your intelligence. AI is afraid of you playing by no one’s rules, and not minding the pain.

Three Battlefields

“Generate desire.” “Become an anomaly.” “Deliberately choose friction.” These phrases sound like advice for personal cultivation.

But they are not only personal issues. They are institutional ones.

Whether a person can generate desire depends greatly on whether their environment nurtured or suppressed that capacity. Whether a person can become an anomaly depends greatly on whether society rewards or punishes anomalies. Whether a person can choose friction depends greatly on whether they have the resources to absorb friction’s costs.

So the question is not only “what should individuals do” but also “what must institutions change.”

I want to address three domains: education, research, and commerce. They share a common thread: all three are undergoing a redefinition of “what has value.” And the direction of that redefinition will determine whether future humans are “definers” or “the defined.”

Education: Protecting Variance

What is the essence of modern education?

Eliminating variance.

Turning different children into similar products. Standardized knowledge, standardized exams, standardized evaluation systems.

Why? Because the industrial age needed it.

Factories needed interchangeable parts, not unique individuals. Assembly lines needed workers who could execute standard operations, not people with their own ideas.

The education system was designed as a “talent factory.” Input: children of every kind. Output: standardized “labor.”

This system was effective in the industrial age. It produced enormous numbers of people capable of industrial work and supported rapid economic growth.

In the AI age, this logic has collapsed entirely.

Because standardized things are where AI beats humans.

Standardized knowledge? AI remembers more and never forgets.

Standardized exams? At least in some highly standardized assessments that can be completed online, AI has already changed the outcome. One Australian researcher tracking a large first-year statistics course found that after ChatGPT spread, exam scores rose by 21.88 percentage points, with pass rates climbing from 50% to 86%.

Standardized skills? AI executes them faster, more precisely, and more cheaply.

If education aims to produce “standardized talent,” that aim is now meaningless. AI is the most standardized “talent” imaginable.

But that same study uncovered another number: research project scores fell by 10.44 percentage points, with pass rates dropping from above 80% to 72%.

Exam scores up. Research scores down.

Why?

Because exams test standardized knowledge, and AI can help with that. Research requires independent thinking, originality, and the ability to face the unknown, things AI cannot provide.

This data illuminates the direction education must take: not eliminating variance, but protecting it.

UCSD’s Director of Academic Integrity said: “Oral assessment is experiencing a renaissance.”

Oral exams do not test how much you’ve memorized. They test whether you can think independently under pressure, articulate clearly, and respond to challenge. These are abilities AI can hardly replace.

ACCA went further: beginning in March 2026, it will end routine remote sittings of its on-demand computer-based exams (CBEs) in countries where exam centers are available. The reason it gave was blunt: cheating technology had outpaced the existing safeguards.

Australia’s Tertiary Education Quality and Standards Agency called for replacing vulnerable assessment types with “authentic tasks.”

These changes all point in the same direction: from testing for “standard answers” to testing for “distinctive thinking.”

The future of education is not “eliminating variance.” It is “maximizing variance.”

Protect children’s eccentricities. The child who asks strange questions. The child who is always daydreaming. The child who refuses to follow the rules.

Protect their “useless” pursuits. Hobbies that seem to “waste time.” Interests with “no future.” Explorations that are “beside the point.”

Because standardized ability is worthless. Only variance commands a premium.

In other words: the goal of education should shift from “producing people who can be replaced by algorithms” to “producing people who can generate their own desire.”

Those eccentricities, those useless pursuits, those rule-breaking impulses: these are the early forms of the capacity to generate desire. Suppress them, and you mass-produce meaning-consumers. Protect them, and you might cultivate meaning-makers.

Research: Learning to Pose the Questions

AI is transforming the practice of science.

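AlphaFold cracked the protein folding problem, in the sense of predicting a protein’s structure from its sequence, and its lead developers, Demis Hassabis and John Jumper, shared the 2024 Nobel Prize in Chemistry. A puzzle that had frustrated biologists for over fifty years was resolved by AI in a few.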

Decades of experiments had determined roughly 200,000 protein structures. The AlphaFold database now holds predictions for over 200 million. Researchers using AlphaFold submitted more than 40% more experimental protein structures than before.

In February 2026, Analemma unveiled FARS (Fully Automated Research System), a fully automated science pipeline. By its own account, the system ran for 228 continuous hours, autonomously formulating hypotheses, designing experiments, executing code, analyzing results, and writing papers, without human intervention, producing 100 short papers in total.

228 hours. 100 papers.

This does not mean the system is already close to a top scientist. It does mean one thing: automating large parts of the “solving” pipeline is no longer science fiction.

AI is learning how to “do science.”

What does this mean?

It means “solving problems” is depreciating as a skill.

In the past, a scientist’s value lay largely in problem-solving. Given a question, find the answer. This required specialized knowledge, experimental skill, and analytical ability.

AI is acquiring all of these. It can read all the literature, design experiments, analyze data, and generate hypotheses. And it is faster, more comprehensive, and never fatigued.

If “problem-solving” is the core value of a scientist, that value is being eroded by AI.

But science is not only about solving problems. Science has another dimension: setting them.

Deciding which problems are worth studying. Judging which directions are promising. Choosing, from among infinite possibilities, the direction worth investing decades of one’s life in.

AI can generate ten thousand molecular structures in a second. But “which one is worth thirty years of your life”: that decision can only be made by a human.

AlphaFold solved protein folding, but “which proteins are worth studying” remains a human question. AI can predict the structure of any protein, but it does not know which structures matter more to humanity, or which directions carry deeper meaning.

This is the scientist’s new role: not problem-solver, but problem-poser.

AI handles exhaustive exploration of all possibilities. Humans handle judging which ones are worth pursuing.

AI exhausts the solution space. Humans define the problem space.

This is not merely a division of labor. It is a difference in kind.

Posing good questions requires not only knowledge and technique, but judgment, intuition, taste. These things are hard to quantify, hard to train, and hard to replicate algorithmically.

A good scientific question often emerges from a deep understanding of the world, from cross-domain connections, from something that cannot quite be articulated, a “feeling.”

That “feeling” is, at its core, the capacity to generate desire expressed in the domain of science. It does not select from an existing list of questions to pursue. It creates a question worth asking, from nothing.

The future of science belongs to those who can pose the questions. And the capacity to pose questions is exactly what algorithms find hardest to replicate.

Commerce: Selling Friction

If AI can meet all needs at low cost, what becomes scarce?

Convenience is no longer scarce. AI can provide unlimited convenience. Want something? Say the word. Need something done? AI does it.

When convenience becomes cheap, friction becomes precious.

The digital detox travel market was worth $52.2 billion in 2024 and is projected to reach $466.5 billion by 2034, a compound annual growth rate of about 24.5%.

People are willing to pay for “inconvenience.”

A British company called Unplugged offers “off-grid cabin” experiences. Guests lock their phones in a safe upon arrival, then spend three days in an environment with no internet.

94% occupancy. Annual booking growth of 25%. Plans to double the number of cabins and expand into Europe by 2025.

The founder said: “Smartphones are designed to keep people online and indoors. But people are realizing that their devices are distracting them from the pleasures of everyday life.”

What are people paying for?

Being “forced” to face themselves. The boredom of having nothing to scroll through. The awkwardness of having to actually talk to the people they came with. The thoughts they can’t escape when they are alone.

All of these are “friction.” From an efficiency standpoint, they are things to be eliminated. But people pay for them.

The American craft market reached approximately $51 billion in 2025, with the average hobbyist spending $3,200 annually. The “efficiency” of handcraft is extremely low: a handmade item might take hours or days, while a machine could produce a more precise copy in seconds.

But people spend time and money making things by hand. What they’re buying is not the product. It’s the process. The feeling of having made something. The fact of “I made this.”

This reveals a commercial trend: when convenience becomes cheap, resistance becomes precious.

Digital detox travel, craft experiences, phone-free hikes, art that demands enormous mental effort. The value of these things lies precisely in their inefficiency.

This is not nostalgia, not anti-technology. It is the market voting with real money: humans need friction to confirm their own existence. When algorithms make everything smooth, “roughness” itself becomes a scarce resource.

Commercial logic is simple: scarce things are valuable. When AI makes efficiency cheap, the things AI cannot provide become precious. And what AI is least able to provide is exactly what requires you to be physically present, to personally invest, to personally endure.

Education protects variance. Research encourages question-setting. Commerce sells resistance. The direction of change in all three domains points toward the same conclusion: in the AI age, what is truly scarce is not efficiency. It is whatever makes humans human.

Closing

Back to Tengyu Ma’s sentence: “Maybe the hardest job in 10 years will be to train humans to be super-AI rather than train AI to be super-human.”

The problem with this sentence lies not in whether it is right or wrong, but in its hidden assumptions: that humans should be “trained,” should become “super,” and that this “super” is defined by AI.

Accept those assumptions, and we have already lost.

Because on the dimensions where AI excels, humans will always be the ones losing. Faster, more accurate, more stable, more efficient. This is silicon’s home field. Carbon-based life has no chance on that track.

So the real question is not “how can humans become more like AI” but rather “how can humans remain unlike AI.”

Not convergence, but divergence.

Not competing in the same coordinate system, but holding firm to a coordinate system that AI cannot enter.

What lives in that coordinate system?

The capacity to generate desire from nothing. The impulse to dedicate oneself to useless things. The possibility of rebuilding after collapse. Pain. Confusion. The irrationality that baffles algorithms.

In the language of efficiency, these are defects. But the efficiency perspective is itself the algorithm’s perspective.

Seen through the algorithm’s eyes, humans are of course full of flaws.

But humans are not obligated to see themselves through the algorithm’s eyes.

“The usefulness of uselessness: therein lies the greatest use.”

These words, which trace back to Zhuangzi more than two thousand years ago, carry new weight in the age of AI.

The “useless” parts are precisely the parts the algorithm cannot touch. And what the algorithm cannot touch is the last domain that belongs to humans.

Hold it.

Not because it is “useful.”

But because that is where “you” live.